About the Customer

Stage5 is an AI-native SaaS company redefining how businesses interact with software. Its mission is to move routine and complex operational work out of inboxes and ad-hoc processes and into autonomous AI agents. The platform enables companies of any size to embed AI into core workflows, driving growth, reducing operational costs, and offloading tasks that were historically too difficult or too expensive to automate. Stage5 supports a hybrid operating model of software, AI, and humans: agents design and execute workflows, while people provide oversight when needed. The platform is hyper-personalized by design: each customer’s solutions are tailor-made through AI-driven solution engineering on top of its automation and AI platform. Stage5 also delivers conversational agents that let employees and end customers interact with the system through their preferred communication channels, meeting users where they already work rather than forcing adoption of a new interface.

Unlike traditional SaaS platforms that rely on static user interfaces, Stage5 was built from the ground up as a conversation-first system in which AI agents are the primary operating layer rather than an add-on feature. The company’s long-term vision is to build a self-driving business operations platform in which intelligent agents continuously design, execute, and optimize workflows across systems.

Stage5 operates as a secure, multi-tenant SaaS solution deployed entirely on AWS.

Customer Challenge

To realize its vision, Stage5 required a production-grade Generative AI architecture capable of securely operating in a multi-tenant SaaS environment at scale. The platform needed to dynamically select the most appropriate foundation model for each task based on reasoning complexity, latency, and cost efficiency, while abstracting this technical complexity away from end users.

Because Stage5 agents are designed to execute real operational workflows — including data aggregation, document handling, coordination across systems, and business process automation — the architecture required strict tenant isolation, secure third-party integrations, full auditability of AI-generated actions, and governance mechanisms to reduce hallucinations and unintended behaviors.

Building a truly AI-native operational layer demanded enterprise-level security, observability, scalability, and DevOps maturity from day one.

The Solution

ProfiSea partnered with Stage5 to architect and productionize a secure, scalable Generative AI platform on AWS.

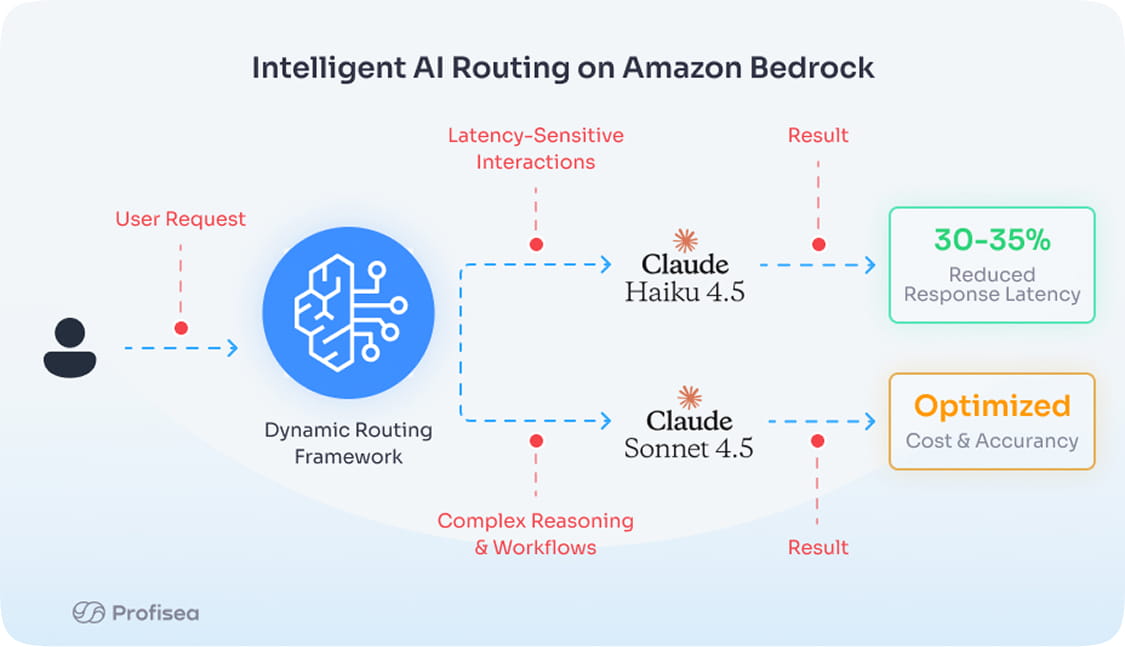

The platform leverages Amazon Bedrock to access Anthropic Claude foundation models. Claude Sonnet powers complex reasoning and workflow orchestration, while Claude Haiku supports latency-sensitive conversational interactions. A dynamic routing framework selects the optimal model based on reasoning depth, response speed, and cost considerations, optimizing both performance and efficiency while shielding end users from model management complexity.
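The routing idea can be illustrated with a minimal sketch. This is not Stage5's actual router: the `TaskProfile` fields, the 1 to 5 depth scale, the threshold, and the tier labels are all assumptions made for illustration, and real Amazon Bedrock model identifiers are deliberately left out.

```python
from dataclasses import dataclass

# Hypothetical tier labels, not real Bedrock model IDs.
SONNET = "claude-sonnet"
HAIKU = "claude-haiku"


@dataclass(frozen=True)
class TaskProfile:
    """Assumed description of a task; the fields are illustrative only."""
    reasoning_depth: int     # 1 (simple lookup) .. 5 (multi-step orchestration)
    latency_sensitive: bool  # True for live conversational turns


def select_model(task: TaskProfile, depth_threshold: int = 3) -> str:
    """Pick a model tier from reasoning depth and latency sensitivity.

    Latency-sensitive tasks must clear a higher depth bar before the
    heavier model is chosen, so the faster, cheaper tier is the default.
    """
    threshold = depth_threshold + 1 if task.latency_sensitive else depth_threshold
    if task.reasoning_depth >= threshold:
        return SONNET
    return HAIKU


if __name__ == "__main__":
    print(select_model(TaskProfile(reasoning_depth=5, latency_sensitive=False)))
    print(select_model(TaskProfile(reasoning_depth=2, latency_sensitive=True)))
```

Keeping the decision in one pure function means callers never name a model directly, which is one way to hide model management from end users.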

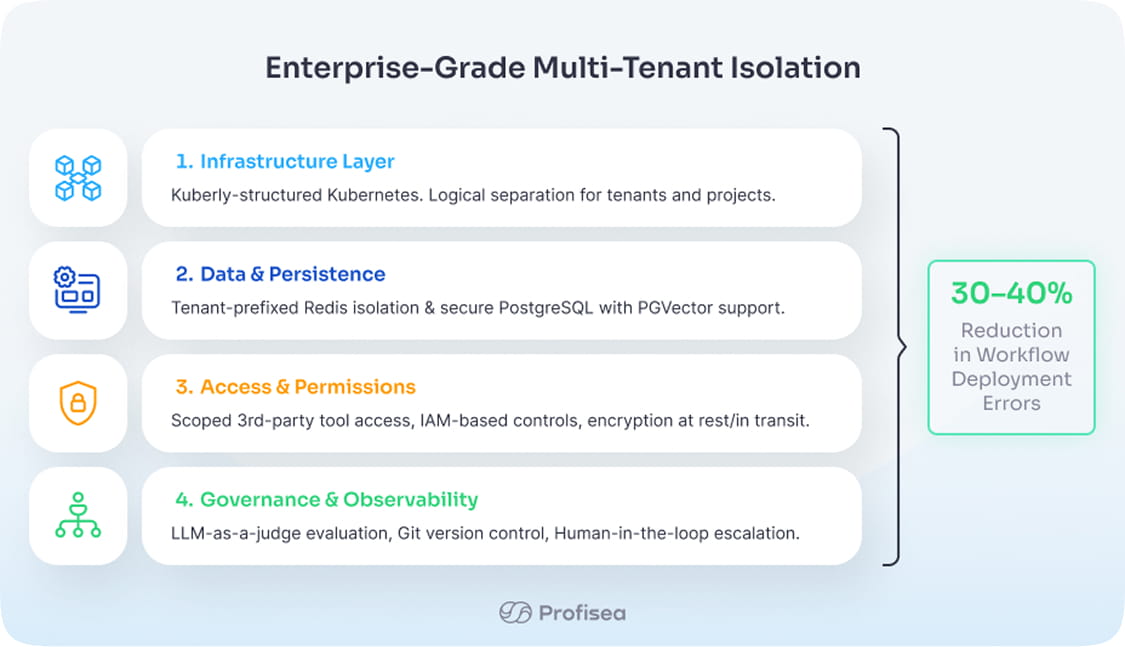

The application is deployed on Kubernetes within AWS and was engineered as a multi-tenant architecture from inception. To accelerate platform engineering maturity and ensure operational consistency, the Kubernetes environment was structured using ProfiSea’s platform engineering solution, Kuberly, which provides standardized infrastructure patterns, CI/CD integration, and environment isolation tailored for SaaS-scale workloads.

Logical isolation separates tenants, projects, and development environments. IAM-based access controls, encryption, and controlled API access protect data in transit and at rest. Short-term conversational context is managed via Redis with tenant-prefixed isolation, and persistent data is stored in PostgreSQL, with an architecture designed to support pgvector-based retrieval for advanced RAG capabilities.
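Tenant-prefixed isolation of short-term context can be sketched as follows. The key layout (`tenant:<id>:conv:<id>`), the identifier rules, and the class name are assumptions for illustration. A plain dictionary stands in for Redis so the example runs on its own; Stage5's actual key scheme is not described in the source.

```python
import re

# Assumed rule: identifiers may not contain ':' so a crafted ID
# cannot reach into another tenant's key namespace.
_ID_PATTERN = re.compile(r"[A-Za-z0-9_-]+")


def context_key(tenant_id: str, conversation_id: str) -> str:
    """Build a tenant-prefixed key (hypothetical layout)."""
    for part in (tenant_id, conversation_id):
        if not _ID_PATTERN.fullmatch(part):
            raise ValueError(f"invalid identifier: {part!r}")
    return f"tenant:{tenant_id}:conv:{conversation_id}"


class TenantContextStore:
    """Short-term context bound to one tenant.

    `backend` is a dict standing in for a Redis client in this sketch.
    """

    def __init__(self, tenant_id: str, backend: dict):
        self._tenant_id = tenant_id
        self._backend = backend

    def append(self, conversation_id: str, message: str) -> None:
        key = context_key(self._tenant_id, conversation_id)
        self._backend.setdefault(key, []).append(message)

    def history(self, conversation_id: str) -> list:
        key = context_key(self._tenant_id, conversation_id)
        return list(self._backend.get(key, []))


if __name__ == "__main__":
    shared = {}
    acme = TenantContextStore("acme", shared)
    globex = TenantContextStore("globex", shared)
    acme.append("c1", "hello")
    print(acme.history("c1"), globex.history("c1"))
```

Because each store object is bound to a single tenant at construction, application code never assembles a key by hand, and the same conversation ID under two tenants resolves to two distinct keys.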

To uphold security and responsible AI principles, Stage5 implements scoped tool access rather than broad third-party permissions. Agents are granted tightly controlled capabilities that limit access to specific resources. All workflows are version-controlled in a Git-based system, enabling full traceability, controlled publishing, and structured change management. Human-in-the-loop escalation steps ensure oversight before executing sensitive actions.
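A minimal sketch of scoped tool access with a human-in-the-loop gate might look like the following. The `ToolGrant` and `AgentScope` names, the three outcomes, and the example tools and resources are hypothetical; they illustrate the pattern rather than Stage5's implementation.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ToolGrant:
    """One narrowly scoped capability given to an agent (illustrative)."""
    tool: str
    resources: frozenset
    requires_approval: bool = False  # sensitive actions need a human sign-off


class AgentScope:
    """Holds an agent's grants and decides whether a tool call may proceed."""

    def __init__(self, grants: Iterable[ToolGrant]):
        self._grants = {g.tool: g for g in grants}

    def authorize(self, tool: str, resource: str,
                  approved_by: Optional[str] = None) -> str:
        """Return 'allow', 'escalate', or 'deny' for a requested action."""
        grant = self._grants.get(tool)
        # Anything not explicitly granted is denied.
        if grant is None or resource not in grant.resources:
            return "deny"
        # Sensitive tools pause until a named human has approved.
        if grant.requires_approval and approved_by is None:
            return "escalate"
        return "allow"


if __name__ == "__main__":
    scope = AgentScope([
        ToolGrant("read_invoice", frozenset({"invoices/2024"})),
        ToolGrant("send_payment", frozenset({"vendor-42"}), requires_approval=True),
    ])
    print(scope.authorize("read_invoice", "invoices/2024"))
    print(scope.authorize("send_payment", "vendor-42"))
```

The default-deny check comes first, so an approval can never widen what an agent is allowed to touch; it only releases an action that was already in scope.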

Comprehensive observability includes infrastructure monitoring via Grafana, AI interaction logging, automated agent acceptance testing, and LLM-as-a-judge evaluation workflows. These mechanisms allow Stage5 to continuously improve agent reliability and maintain high operational integrity.
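As a rough illustration of how LLM-as-a-judge evaluation can feed acceptance testing, the sketch below scores each agent output against a criterion and reports the cases that fall below a threshold. The `AcceptanceCase` type, the 0 to 1 score scale, and the 0.8 threshold are assumptions. The judge is a trivial keyword stub so the example runs offline; in practice the judge would be a call to a model.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

# A judge maps (criterion, agent_output) to a score in [0, 1].
Judge = Callable[[str, str], float]


@dataclass(frozen=True)
class AcceptanceCase:
    """One acceptance test for an agent workflow (illustrative shape)."""
    name: str
    criterion: str
    output: str


def failed_cases(cases: Iterable[AcceptanceCase], judge: Judge,
                 min_score: float = 0.8) -> List[str]:
    """Return the names of cases whose judged score is below min_score."""
    return [c.name for c in cases if judge(c.criterion, c.output) < min_score]


def keyword_judge(criterion: str, output: str) -> float:
    """Stub judge: full marks if the criterion text appears in the output."""
    return 1.0 if criterion.lower() in output.lower() else 0.0


if __name__ == "__main__":
    cases = [
        AcceptanceCase("invoice-total", "total due", "Total due: 120 EUR"),
        AcceptanceCase("invoice-date", "due date", "Total due: 120 EUR"),
    ]
    print(failed_cases(cases, keyword_judge))
```

Passing the judge in as a callable keeps the gate logic testable without a model call and lets the same harness run in CI with a stub and in evaluation runs with a real judge.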

Results and Benefits

Stage5 successfully launched a production-grade AI agent platform on AWS, establishing a strong technical foundation for its mission of autonomous business operations.

Dynamic model routing via Amazon Bedrock improved model usage efficiency by directing lightweight tasks to lighter, faster models. In internal benchmarking, this reduced average conversational response latency by approximately 30–35% and cost by approximately 60%.

The implementation of scoped third-party integrations, IAM-based access controls, encryption, and tenant-level isolation strengthened the platform’s security posture and aligned it with AWS security best practices for multi-tenant SaaS architectures.

Structured deployment processes reduced workflow deployment errors by approximately 30–40%, improving stability in the shared multi-tenant environment. Internal validation workflows, including LLM-as-a-judge testing and acceptance criteria evaluation, reduced hallucination-related workflow failures by roughly 20–25% during pilot validation phases.

In early operational use cases, AI-driven workflow automation reduced manual coordination time by approximately 30–50%, accelerating invoice handling, contract workflows, and cross-system data aggregation.

With its AWS-based architecture, Stage5 is positioned to scale securely, onboard enterprise customers, and continue advancing toward its vision of fully AI-driven operational systems.

About ProfiSea

ProfiSea is an AWS Premier-Tier consulting partner specializing in Kubernetes architecture, DevOps modernization, FinOps optimization, Platform Engineering, and Generative AI solutions. The company enables technology innovators to transform ambitious AI visions into secure, scalable, and production-ready platforms on AWS.