Microservices Vocabulary. Part 2: Service Registry, Circuit Breakers, CORS, API Gateways

Everything in a microservices-based app is carefully crafted and invisibly connected, so if one service fails, it can trigger a domino effect throughout the whole system. This is dangerous for your application. So how do you keep a network failure or the failure of a single service from cascading to other microservices?

The Concept of Distributed Services

In a microservices architecture, the services are distributed, but sometimes they have to work closely with each other to handle specific requests. Meanwhile, the number of service instances and their locations in the network change dynamically. The problem is that, to collaborate on request processing, one service often has to invoke another one synchronously, and there is always a chance that the service being called is unavailable for one reason or another.

At the same time, while waiting for the reply, the calling service ties up threads, which are a valuable resource of the app. This can stretch the system’s resources too far and make the caller unavailable for other requests. Consequently, when you build an app where microservices communicate over a network, you should keep in mind several extra patterns that help keep your system from failing.

Service Registry

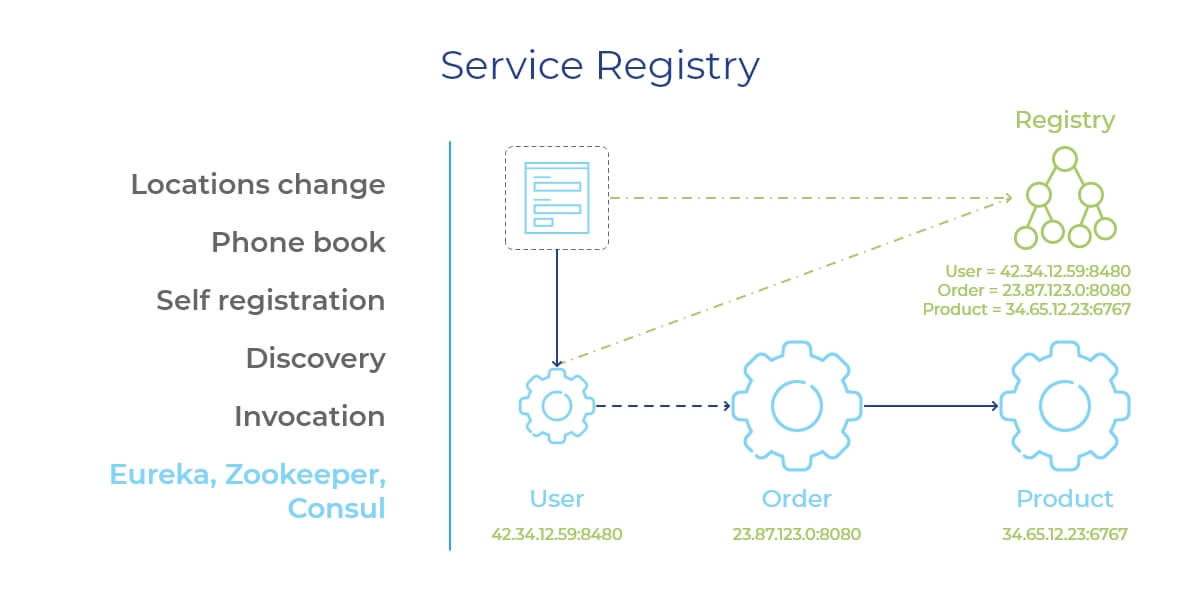

To discover a microservice’s constantly changing network location, the client needs a service registry. Finding the current location of a service instance is called service discovery. A service registry stores the locations of all service instances in the system, allowing the client to look up the service it needs by its logical name.

The requests that services exchange can be either:

- Synchronous – the caller halts until the reply comes back

- Asynchronous – the caller sends the request and carries on with work that can be performed in parallel while waiting for a response.

One drawback of calling a service directly is that the client has to discover the locations of the service instances. Also, during a synchronous call, both the client and the microservice have to stay available for the whole interaction, which reduces their availability for other communication requests.

- Messaging – an asynchronous, more complex style of inter-service communication. Examples of messaging tools include Apache Kafka and RabbitMQ.

The term is self-explanatory: services exchange messages using a broker or channel as an intermediary. In detail, to interact with each other, services send their messages to the broker (see the picture). All microservices (subdomains) interested in those messages subscribe to the broker to receive them. Upon receiving the information carried in the messages, the services update their own state.

This approach results in such benefits as:

- Asynchronous messages support the principle of the loosely-coupled structure of microservices.

- They improve the availability of services, as the broker/channel layer queues up the messages until the receiver is ready to handle them.

- Messaging supports various communication patterns: request-reply, request-asynchronous response, publish-subscribe, publish-asynchronous response, notifications, and more.
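
To make the broker idea more concrete, here is a minimal in-memory sketch in Python. It is not how Kafka or RabbitMQ work internally; `InMemoryBroker`, `ShippingService`, and the `order.created` topic are hypothetical names made up for illustration. It only shows the flow described above: the sender publishes and moves on, the broker queues the message, and the subscriber updates its own state once the message is delivered.

```python
from collections import defaultdict, deque


class InMemoryBroker:
    """Toy stand-in for a message broker (hypothetical, for illustration only)."""

    def __init__(self):
        self._queues = defaultdict(deque)      # topic -> messages waiting for delivery
        self._subscribers = defaultdict(list)  # topic -> handler callbacks

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, message):
        # The sender returns immediately; the message waits in the queue.
        self._queues[topic].append(message)

    def deliver_pending(self, topic):
        # Hand every queued message to every subscriber of the topic.
        queue = self._queues[topic]
        delivered = 0
        while queue:
            message = queue.popleft()
            for handler in self._subscribers[topic]:
                handler(message)
            delivered += 1
        return delivered


class ShippingService:
    """Hypothetical subscriber that updates its own state from messages."""

    def __init__(self):
        self.orders_to_ship = []

    def on_order_created(self, message):
        self.orders_to_ship.append(message["order_id"])


broker = InMemoryBroker()
shipping = ShippingService()
broker.subscribe("order.created", shipping.on_order_created)

# The order service publishes and does not wait for the shipping service.
broker.publish("order.created", {"order_id": 42})
broker.deliver_pending("order.created")
print(shipping.orders_to_ship)
```

A real broker delivers messages on its own and persists them; the explicit `deliver_pending` call here only makes the "queued until the receiver is ready" step visible.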

How does a service registry work? To end up in the registry, a microservice registers its own network location when it starts up and deregisters on shutdown. Using the registry as a phone book, the client discovers the location of a service instance by sending a query. Then it calls the needed microservice with an appropriate request. You might have heard of ready-made tools used as service registries, such as Redis, Apache ZooKeeper, Netflix Eureka, or Consul.
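
The register, deregister, and look-up cycle can be sketched in a few lines of Python. This is a simplified in-process model, not the API of Eureka, Consul, or any other real registry; the `ServiceRegistry` class, the `orders` service name, and the addresses are assumptions made for the example.

```python
import random


class ServiceRegistry:
    """Toy phone book of service instances (hypothetical, in-memory only)."""

    def __init__(self):
        self._instances = {}  # logical name -> set of (host, port)

    def register(self, name, host, port):
        # Called by a service instance when it starts up.
        self._instances.setdefault(name, set()).add((host, port))

    def deregister(self, name, host, port):
        # Called by a service instance on shutdown.
        self._instances.get(name, set()).discard((host, port))

    def lookup(self, name):
        # Called by a client: resolve a logical name to one live instance.
        instances = self._instances.get(name)
        if not instances:
            raise LookupError(f"no running instance of {name!r}")
        return random.choice(sorted(instances))


registry = ServiceRegistry()
registry.register("orders", "10.0.0.5", 8080)
registry.register("orders", "10.0.0.6", 8080)

host, port = registry.lookup("orders")
print(f"calling orders at {host}:{port}")

registry.deregister("orders", "10.0.0.5", 8080)
```

Picking a random instance is only one possible choice; production registries usually add health checks so that crashed instances that never deregistered are dropped as well.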

Circuit Breakers

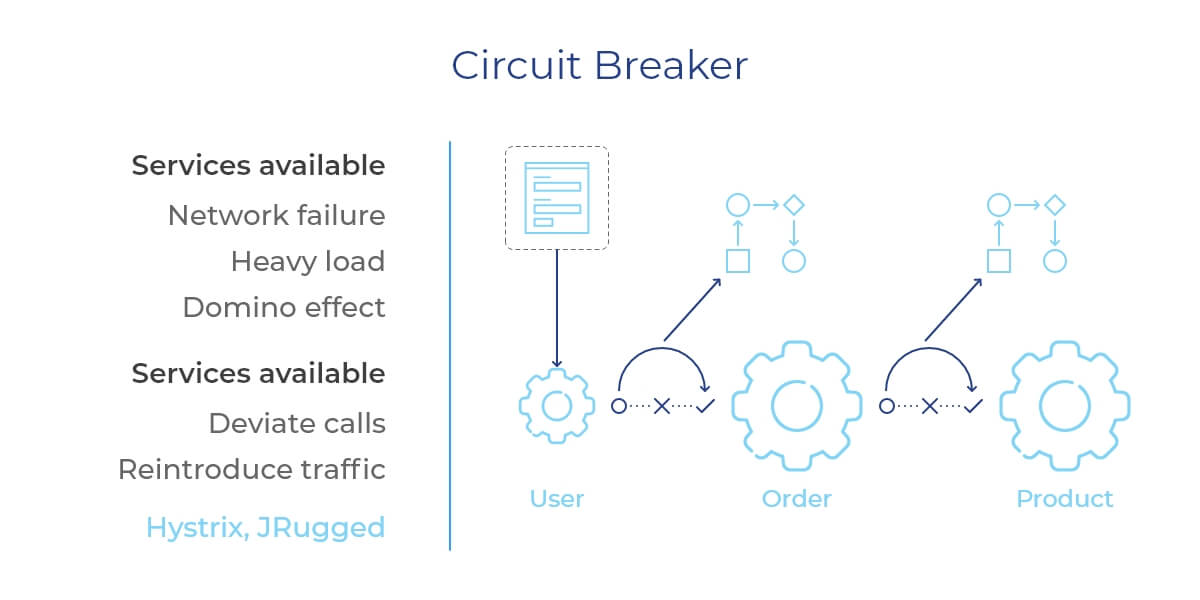

As we’ve discussed earlier, Remote Procedure Invocation is one of the two most popular service-to-service communication styles in a microservices architecture, and it involves no inter-service message broker. So, if you use it in your app, a service has to be up and available at the moment the request arrives. Of course, as practice shows, that’s not always possible. Any microservice can end up being unavailable because of a network failure, heavy load, or other reasons. The failure of the initial call can trigger failures of other services across the entire app, like the domino effect we’ve mentioned above.

How to solve this puzzle? By implementing a circuit breaker, a design pattern in which your app invokes remote microservices through a proxy. The proxy works much like an electrical circuit breaker. When the number of consecutive failed attempts to invoke a service exceeds a pre-set limit, the circuit breaker trips: for a certain timeout period, it stops reaching out to that remote service and fails the calls immediately.

Simply put, the circuit breaker rejects calls to services that are unavailable instead of letting them pile up. After the timeout ends, the circuit breaker lets a limited number of trial calls through to the service again. Only when the service responds successfully to those requests does the circuit breaker return to its normal operation mode and resume the usual request traffic; otherwise, the timeout starts over. Netflix Hystrix, pybreaker, and JRugged are well-known examples of circuit breakers.

The benefit? Your microservices can handle the failure of the invoked services gracefully.

The doubt? Choosing the timeout value is a challenging task: you have to avoid ending up with false positives or excessive latency.
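
The behavior described in this section can be sketched as a small Python class. This is a simplified illustration, not the implementation used by Hystrix, pybreaker, or JRugged; the names `CircuitBreaker`, `CircuitOpenError`, `max_failures`, and `reset_timeout` are assumptions chosen for the example, and this version trips as soon as `max_failures` consecutive calls have failed and allows a single trial call after the timeout.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    """Minimal circuit breaker sketch (hypothetical names and defaults)."""

    def __init__(self, max_failures=3, reset_timeout=30.0, clock=time.monotonic):
        self.max_failures = max_failures    # consecutive failures before tripping
        self.reset_timeout = reset_timeout  # seconds to stay open before a trial call
        self._clock = clock
        self._failures = 0
        self._opened_at = None              # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout:
                # Open: fail fast without touching the remote service.
                raise CircuitOpenError("circuit is open; call rejected")
            # Timeout elapsed: fall through and allow one trial call.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._opened_at is not None or self._failures >= self.max_failures:
                # Trip the breaker, or re-open it after a failed trial call.
                self._opened_at = self._clock()
            raise
        # Success: return to normal operation.
        self._failures = 0
        self._opened_at = None
        return result


def unreliable_inventory_call():
    # Hypothetical remote call that always fails in this demo.
    raise ConnectionError("inventory service is down")


breaker = CircuitBreaker(max_failures=2, reset_timeout=30.0)
for attempt in range(3):
    try:
        breaker.call(unreliable_inventory_call)
    except ConnectionError:
        print("call failed")
    except CircuitOpenError:
        print("call rejected without reaching the service")
```

The `clock` parameter exists only so the timeout can be tested without waiting; the trade-off mentioned above shows up in `reset_timeout`, since a value that is too short hammers a struggling service and one that is too long keeps a recovered service out of rotation.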

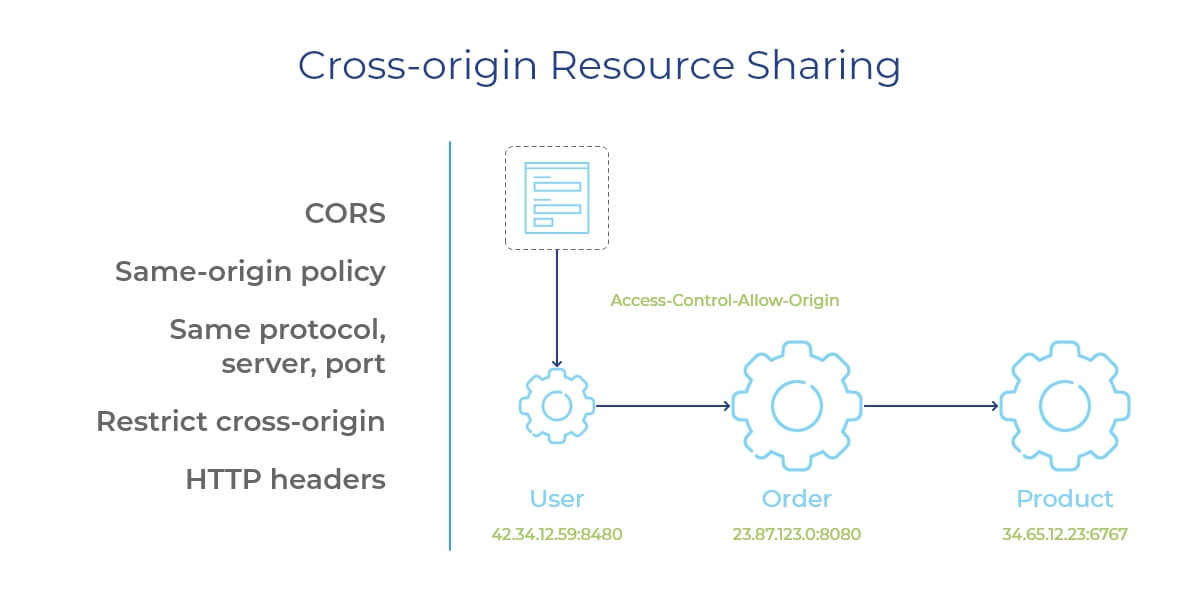

Cross-Origin Resource Sharing (CORS)

This one also deals with calls that cross server boundaries. CORS greatly helps when your microservices are located on different servers. Browsers enforce a restrictive same-origin policy on scripted HTTP requests. This means that, by default, a script can only interact with resources hosted on the same server, i.e. resources that have the same origin.

What is an origin? In short, it’s a combination of protocol, host, and port number. However, you already know that a microservices-based app keeps its services in different locations. What happens when a client loaded from one of them has to call another? This is what we call crossing the origin. As browsers restrict scripted cross-origin HTTP requests for security reasons, your microservices need to send additional HTTP headers (such as Access-Control-Allow-Origin) to grant the client access to selected resources stored on a different server. These are the essentials of the cross-origin resource sharing pattern.
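
As a rough Python illustration of both ideas, the sketch below compares origins and decides which response headers a service might send. The helper names `origin_of` and `cors_headers` and the example URLs are assumptions; real services normally rely on their web framework’s CORS support, which also covers preflight requests.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin_of(url):
    """Return the (protocol, host, port) triple that makes up an origin."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    port = parts.port or DEFAULT_PORTS.get(scheme)
    return (scheme, parts.hostname, port)


def cors_headers(request_origin, allowed_origins):
    """Build response headers granting access to an allowed origin.

    An empty result means the browser will block the cross-origin read.
    """
    if request_origin in allowed_origins:
        return {
            "Access-Control-Allow-Origin": request_origin,
            "Vary": "Origin",  # the response differs per requesting origin
        }
    return {}


# Same protocol, host, and port: same origin.
print(origin_of("https://shop.example.com/cart") ==
      origin_of("https://shop.example.com:443/checkout"))

# Different host: a cross-origin call that needs the extra header.
print(origin_of("https://shop.example.com/cart") ==
      origin_of("https://api.example.com/orders"))

allowed = {"https://shop.example.com"}
print(cors_headers("https://shop.example.com", allowed))
print(cors_headers("https://evil.example.net", allowed))
```

Echoing back only origins from an explicit allow list, rather than answering every request with a wildcard, keeps access limited to the clients you actually expect.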

2. APIs and Contracts. Just business!

Okay, now you probably wonder how exactly each engineering team invokes external microservices. Well, earlier, we’ve mentioned the API’s role in the communication process. Here we add another player: contracts. Just like any business deal, a call, in our case, is sealed with a contract. Such a contract lets a microservice expose the full set of its API’s functions.

For instance, your app’s order processing microservice offers two APIs: one for creating new shipment orders, the other for tracking existing orders until they’re successfully delivered. So how do the other services in the system know what is available and how to invoke it? The microservice publishes a contract to the system. The other microservices retrieve it when needed, read it, and set up remote invocation of the API accordingly.

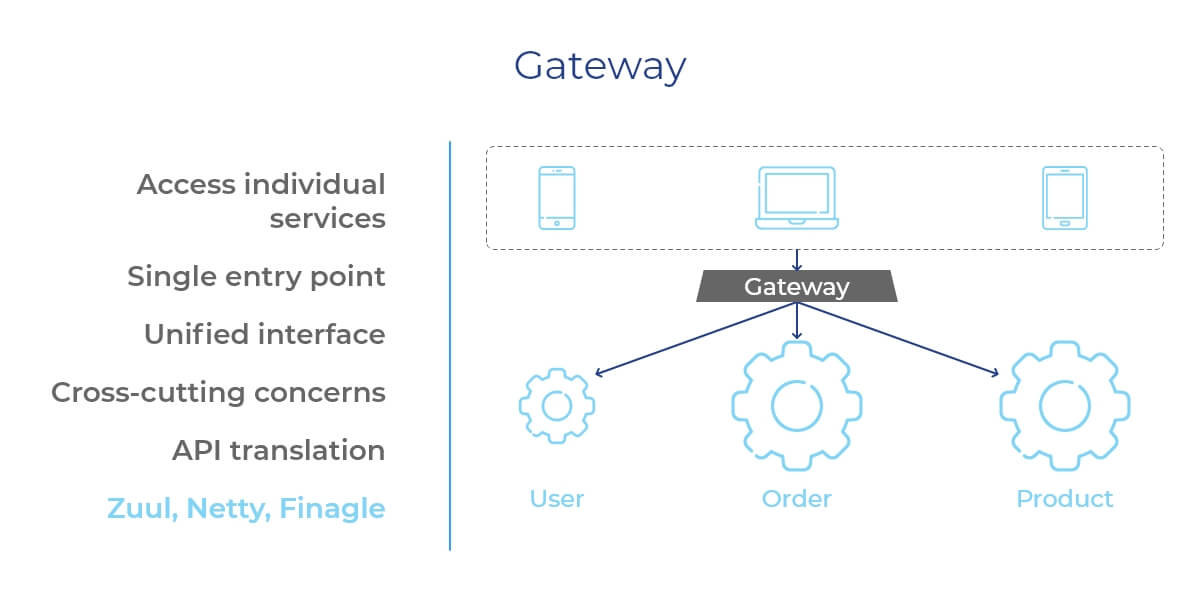

API Gateways

Remember how we found out that all the elements and components of user interfaces in the microservices ecosystem must be aggregated to form a single app? Still, each component or element must access a particular service. How do you achieve that? For instance, you can code a direct client‑to‑microservice connection between the components and your services, but then each of those connections has to deal with cross-cutting concerns like security on its own.

A way out is to set up an API gateway as a single entry point for all clients. In other words, each client gets a unified interface to access all microservices. Different devices request different data, so rather than providing one universal, all-in-one API, the gateway exposes a different, tailored API for each kind of client. The gateway is responsible for request routing, protocol translation, and composition.

Such API gateways have two ways of processing the client requests:

- Proxy/route some of the requests to the service pre-set to handle them;

- Fan out other calls to a number of services (requests such as authentication, authorization, service discovery via querying the Service Registry).

Popular gateway solutions for microservices architecture include Spring Cloud Gateway, Netflix Zuul, and NGINX Plus; gateways are also built on top of networking frameworks such as Netty and Finagle.
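
The two ways of processing requests can be sketched in Python as follows. This is a toy model rather than the configuration of any of the products above; the `ApiGateway` class, the paths, and the lambda "services" are hypothetical stand-ins for real network calls.

```python
class ApiGateway:
    """Toy single entry point that routes or fans out requests (hypothetical)."""

    def __init__(self):
        self._routes = {}    # path prefix -> handler of the one responsible service
        self._fan_outs = {}  # exact path -> {part name: service handler}

    def add_route(self, prefix, handler):
        self._routes[prefix] = handler

    def add_fan_out(self, path, handlers):
        self._fan_outs[path] = dict(handlers)

    def handle(self, path, request):
        # Fan out: call several services and compose one response.
        if path in self._fan_outs:
            return {name: handler(request)
                    for name, handler in self._fan_outs[path].items()}
        # Proxy/route: forward to the service registered for the longest prefix.
        for prefix in sorted(self._routes, key=len, reverse=True):
            if path.startswith(prefix):
                return self._routes[prefix](request)
        raise LookupError(f"no route for {path!r}")


gateway = ApiGateway()
gateway.add_route("/orders", lambda req: {"order": req["id"], "status": "shipped"})
gateway.add_fan_out("/dashboard", {
    "profile": lambda req: {"user": req["user"]},
    "recent_orders": lambda req: [101, 102],
})

print(gateway.handle("/orders/101", {"id": 101}))
print(gateway.handle("/dashboard", {"user": "alice"}))
```

In a real gateway, the handlers would be network calls to instances found through the service registry, and checks such as authentication would run once at the gateway before any routing happens.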

Afterword

We keep diving deeper and deeper into the technical terms of the microservices world. Understandably, they can be complicated or confusing, so don’t hesitate to reach out to us for answers. Our technical experts offer free consultations and can examine your project to help you decide whether a microservices architecture suits your business goals.

Stay tuned, more fun is coming!